Camp Twin Lakes (CTL) serves campers who have medical illnesses, disabilities, and other life challenges at two year-round camp locations in Georgia. CTL operates as a partner model camp, serving over 60 partner organizations each year. CTL’s partners are various types of nonprofit organizations who bring the populations that they serve to CTL’s facilities for camping programs.

During summer 2020, Camp Twin Lakes transitioned to virtual summer camp in light of the coronavirus pandemic. In the following article, they detail their team’s process in measuring the virtual program’s impact on campers. They cover outcomes terminology and how CTL defined its camper outcomes, then describe the virtual camp program, the data collection process, and what they learned from the data.

Outcomes, Research, and Program Quality

To measure the impact of camp, our team has come to understand the importance of multiple approaches: outcomes measurement, program quality initiatives, and research studies. We learned that we do not need to conduct formal research studies to measure the impact of camp. Unless we want the knowledge gained to be extrapolated to other camping programs and published for the benefit of the camping community, we can measure CTL’s camper outcomes for the purpose of program improvement. These camper outcomes can be communicated to our key constituents: Partners, board members, donors, and parents.

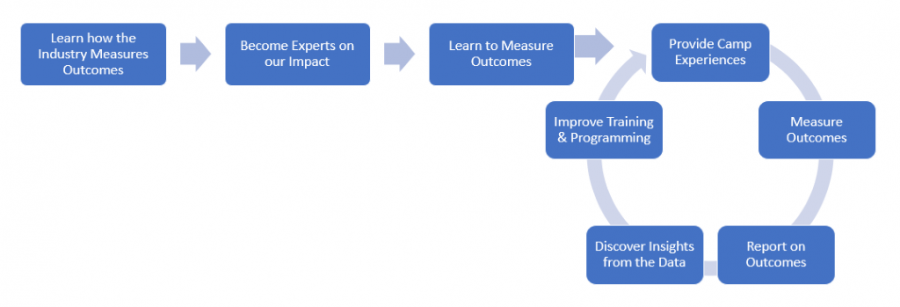

Combining an outcomes measurement system with a program quality initiative gives our team the tools to collect high-quality information and consistently make program improvements. Then we ask more questions, collect more data, and improve the program again. And again. That is our goal: to create a culture of inquiry among our staff and embed data-driven program improvements into the ecosystem of CTL operations. When we are using data to drive program improvements, our impact on campers deepens.

Camp Twin Lakes Outcomes Journey

Our team designed this diagram to reflect the process we embarked on when we began this work more than one year ago. It was important for us to learn how other nonprofit organizations measure their impact and interview CTL staff and campers to understand our own impact. Without this knowledge base, it would have been difficult to accurately define our outcomes and decide what to measure.

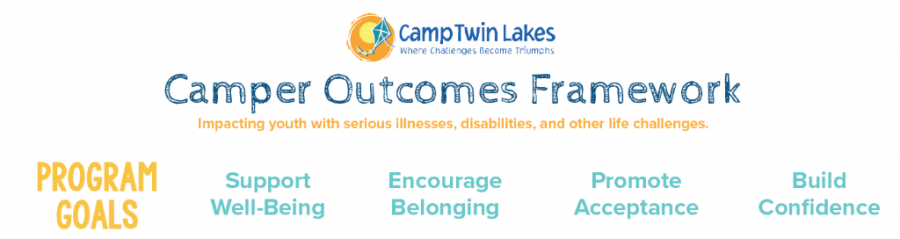

Our staff team, a board committee, and a dedicated staff taskforce invested a significant amount of time into defining our Camp Twin Lakes camper outcomes. We gained insight from other camping professionals, the Georgia Center for Nonprofits, and the research team at ACA. The result of this work is our Camper Outcomes Framework (below). This defines our program goals and camper outcomes across the various populations we serve. The specific indicators for each outcome are unique to each camper population, but this framework gives us guardrails in designing and measuring the effectiveness of our programs and their impact on campers.

Virtual Camping Program

Due to COVID-19 and the cancellation of our in-person summer camp programs, Camp Twin Lakes licensed a learning management system, and our partner organizations could opt into this virtual camp program. During the week a camper would normally have attended camp in person, they had access to the platform’s pre-recorded video content, games, and activities. Campers could participate in the activities from home using everyday household items. Additionally, there were synchronous sessions including daily spirit time, cabin chats, and evening programs.

The work we did to prepare for collecting outcomes data in person left us well prepared to move this initiative online. Our program team used the Camper Outcomes Framework to design intentional virtual activities. Each online content piece was linked with an outcome from the framework, and staff worked toward the program goal associated with that outcome.

Virtual Camping Outcomes & Data Collection

The benefit of campers accessing content on a platform is the quantity of data available. We could pull a report on any day of the summer and see who logged in, how many seconds they were logged in, which activities they accessed, and how they interacted with other campers. But there are pros and cons to having so much data. It is time-intensive to pull all available data, and analyzing all of it wouldn’t result in useful information, so our team decided to collect data from virtual camp for specific goals: camper outcomes, platform feedback, program feedback, and general satisfaction.

Campers, volunteers, and parents each answered questions around satisfaction, outcomes, and platform feedback. The survey questions around program feedback helped our team understand the popularity of different activities across age groups. The questions related to platform feedback gave us data related to ease of use, as well as parent and camper perceptions of feeling safe online. For camper outcomes, we utilized specific agree/disagree statements to measure improvement in each of the four targeted outcome areas. Specifically related to outcomes, campers were asked about changes in themselves, parents were asked about changes in their campers, and volunteers were asked about changes in themselves (our volunteer experience is designed around intentional volunteer outcomes that we won’t have time to discuss in this article).

Then we built a camper usage report that could be generated on a regular schedule to give us feedback on usage of the various online activities and on camper engagement. The report gave us insight into camper participation, number of logins, and time spent on the platform. These data points, together with the survey results, painted a picture of the camper’s experience on the virtual camp platform.
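For readers who want to build a similar report, here is a minimal sketch in Python of how the three measures named above (participation, number of logins, and time spent) could be rolled up per camper. It is an illustration only, not the report CTL built: the `Session` record, its field names, the choice to count each session as one login, and the sample rows are all hypothetical and do not reflect the platform's actual export format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    """One platform session (hypothetical shape, not a real export format)."""
    camper_id: str
    activity: str
    seconds: int


def usage_report(sessions):
    """Summarize logins, total seconds, and distinct activities per camper.

    Assumption: each session record counts as one login.
    """
    report = defaultdict(lambda: {"logins": 0, "seconds": 0, "activities": set()})
    for s in sessions:
        row = report[s.camper_id]
        row["logins"] += 1
        row["seconds"] += s.seconds
        row["activities"].add(s.activity)
    return dict(report)


# Made-up sample rows, for illustration only.
sample = [
    Session("camper-a", "spirit time", 900),
    Session("camper-a", "craft video", 300),
    Session("camper-b", "cabin chat", 1200),
]
report = usage_report(sample)
```

Keeping the summary to a few fields per camper mirrors the decision described earlier to collect data for specific goals rather than pull everything the platform makes available.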

Although we would have loved to create a 50-question camper survey asking everything we wished we knew, this just wasn’t practical. Instead, we picked the most important data points and condensed the camper survey to 10 questions. Campers had to complete the survey on the platform in order to access the final day’s content, and we didn’t want them logging out to avoid the survey and skipping the final day of camp. For that reason, we intentionally kept the survey short and did not ask open-ended questions. Because the platform’s survey logic did not allow for varying questions based on previous answers, our team standardized the questions to apply to all campers. After testing the survey with kids of varying ages, we made tweaks to word choice and adjusted question types for reading comprehension. These steps helped make the survey accessible to campers of every ability level.

At our typical in-person summer camp, we also survey our Partners to ask about their camp experience and our working relationship with them. In our virtual context, we changed this to a post-camp meeting with standard questions. This interview style allowed for quicker feedback and the ability to implement changes to the virtual camp program in real time throughout the summer. Within one week of the end of the summer camp session, we assembled each group’s camper usage, camper survey, parent survey, and volunteer survey data and sent this information to the Partner organization in a standard report.

What Did We Learn?

The benefit of beginning an entirely new program is that there is no preset definition of success. The program is brand new, so the data analysis process is an exciting opportunity to learn and enhance the program quickly. Additionally, our team is interested in continuing the virtual program for hospital outreach, and this data helps us understand program value using camper outcomes. We are equipped to continuously improve the program to deepen the impact on our campers moving forward.

We learned a lot through this process, and we are still going through the data, but there are already some key takeaways. First, community is the most important part of camp. 68% of our campers chose synchronous sessions as their favorite activities. This means that campers enjoyed using the platform for live engagement with the camp community more than for asynchronous video content. Second, confidence building is feasible in an online environment. 83% of campers tried something new, and 87% of campers learned something new because of our online content. Both of these are key indicators of improvement in our camper outcome of self-efficacy. Finally, getting campers onto the platform for the first time is the biggest hurdle to their engagement in the program. 71% of campers who logged in at least one time logged in at least 5 times during the week.
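The last figure is a conditional share: the denominator is only the campers who logged in at least once, not everyone enrolled. A minimal sketch of that calculation in Python follows, assuming a hypothetical mapping from camper to weekly login count. The function name, the threshold parameter, and the sample counts are made up for illustration and are not CTL data.

```python
def returning_share(login_counts, threshold=5):
    """Share of campers with at least one login who reached `threshold` logins.

    `login_counts` maps a camper identifier to that camper's login count
    for the week (hypothetical input shape).
    """
    active = [n for n in login_counts.values() if n >= 1]
    if not active:
        return 0.0  # no one logged in, so the share is undefined; report 0
    return sum(1 for n in active if n >= threshold) / len(active)


# Made-up counts: one camper never logged in and is excluded from the base.
counts = {"a": 0, "b": 1, "c": 5, "d": 7, "e": 2}
share = returning_share(counts)
```

Excluding campers who never logged in is what lets the number speak to engagement after the first login, which is the point the takeaway makes.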

With this data collection strategy, our team has a good understanding of the virtual camp experience, and we have identified action steps to improve the virtual program moving forward. We will continue collecting data through this virtual platform and refining our approach. And at the end of the day, whether the camp program is in person or virtual, our team is committed to collecting outcomes data, measuring program quality, and continuously improving our programs to deepen our impact on our campers.

If you are interested in learning more about measuring impact for your organization, two books we recommend are Nonprofit Outcomes Toolbox by Robert Penna and Measuring Program Outcomes: A Practical Approach by United Way of America. Additionally, the ACA research blog has great articles about designing outcomes measurement using logic models.

Michelle Bevan is the project manager for research and outcomes at Camp Twin Lakes. She manages CTL’s program quality improvement and the research and outcomes initiative that demonstrates the impact of camp.

Thanks to our research partner, Redwoods.

Additional thanks go to our research supporter, Chaco.

The views and opinions expressed by contributors are their own and do not necessarily reflect the views of the American Camp Association or ACA employees.